- Screaming Frog SEO Spider alt tags how to

- Screaming Frog SEO Spider alt tags PDF

- Screaming Frog SEO Spider alt tags code

Here's a helpful resource for resolving no response codes: SEER Screaming Frog Guide by SEER Interactive.

This is a count of URIs with a successful (2xx) response code. This class of status codes indicates that the action requested by the client was received, understood, accepted, and processed successfully. The most common response code is 200, but there are nine others that describe different types of successful responses. From an SEO perspective, we want this count to be the highest of all the response codes. Here is a helpful resource on response codes: Response Codes Explained with Pictures via Moz.

This is a count of URIs that redirect to another URI. This class of status codes (3xx) indicates that the client must take additional action to complete the request, and many of these codes are used in URL redirection. A high number of 302 redirects is a cause for concern and should be investigated further. You will sometimes see a 307 response code; with some research, you'll find that a 307 is also a temporary redirect. For SEO purposes, it is still best practice to use a 301 when permanently redirecting a URL and a 302 when temporarily redirecting one.

This is a count of URIs that returned a client error (4xx), most often because they point to pages that no longer exist. The 4xx class of status codes is intended for situations in which the client seems to have erred.
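The status-code buckets described above can be sketched in Python. This is a minimal illustration, not Screaming Frog's implementation: the bucket labels are hypothetical, `None` is assumed to stand for a URI that returned no response at all, and 308 is included as the permanent counterpart of 307 (both are defined in RFC 9110).

```python
from collections import Counter
from typing import Iterable, Optional

# Hypothetical grouping of redirect codes by permanence (codes per RFC 9110).
PERMANENT_REDIRECTS = {301, 308}
TEMPORARY_REDIRECTS = {302, 307}


def bucket(status: Optional[int]) -> str:
    """Map one crawled URI's status code to a report bucket.

    None is assumed to mean the server never answered (e.g. a timeout).
    """
    if status is None:
        return "No Response"
    if 200 <= status < 300:
        return "Success (2xx)"
    if status in PERMANENT_REDIRECTS:
        return "Redirect (permanent)"
    if status in TEMPORARY_REDIRECTS:
        return "Redirect (temporary)"
    if 300 <= status < 400:
        return "Redirect (other 3xx)"
    if 400 <= status < 500:
        return "Client Error (4xx)"
    if 500 <= status < 600:
        return "Server Error (5xx)"
    return "Other"


def summarize(statuses: Iterable[Optional[int]]) -> Counter:
    """Count how many crawled URIs fall into each bucket."""
    return Counter(bucket(s) for s in statuses)


if __name__ == "__main__":
    crawl = [200, 200, 204, 301, 302, 307, 404, 410, 503, None]
    for name, count in summarize(crawl).most_common():
        print(f"{name}: {count}")
```

Splitting the 3xx codes by permanence makes an unusually high share of temporary redirects easy to spot, which is the pattern the text flags as worth investigating.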

Screaming Frog SEO Spider alt tags how to

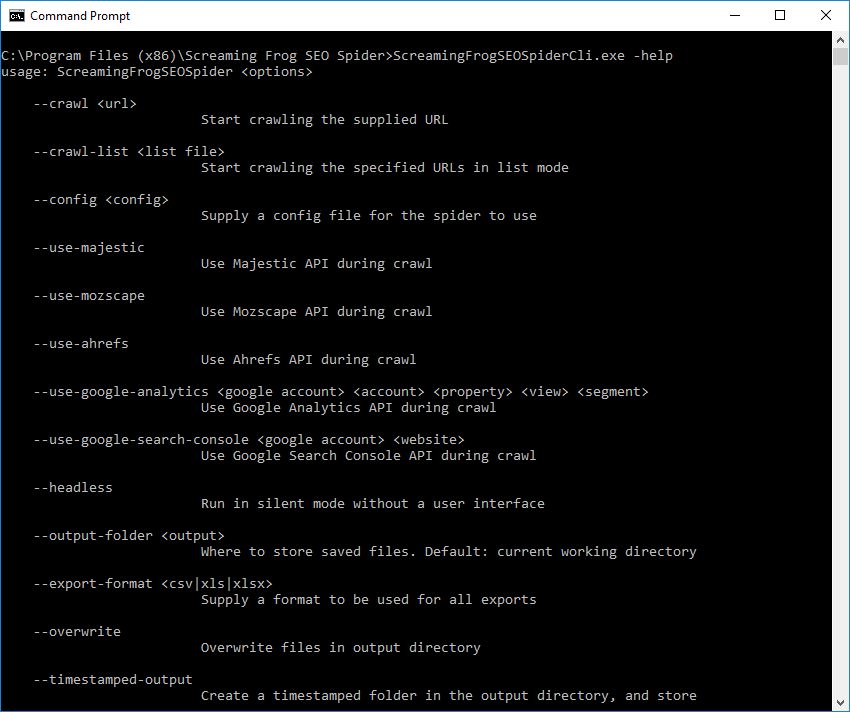

Types of plugins/content that may still use Flash.

This is a count of URIs by protocol (HTTP and HTTPS) that Screaming Frog crawled. There is nothing wrong with having a count for both. HTTPS adds latency to page load time, so many sites have sections (mainly login and checkout carts) that are secured while the rest of the site is HTTP. You should be in control of which URL Google is ranking: make sure you're forcing the correct version of each page to load, and use canonical tags correctly. Helpful resources for controlling HTTP and HTTPS URLs: 5 Common Mistakes with rel=canonical by Webmaster Central Blog.

This is a count of URIs that are blocked by the site's robots.txt file. Whether a URL should be blocked from crawling should be evaluated on a case-by-case basis, but if you want a page to be indexed by search engines, it should not be blocked in robots.txt. Here's a helpful resource for using robots.txt:

This is a count of URIs that, for some reason, did not return a response code. Typically this is because the server is slow or busy, causing Screaming Frog to time out and move on. By default, the SEO Spider waits 10 seconds to get any kind of HTTP response from a URL. You can increase the time Screaming Frog waits for a response; see the resource link for how to do that.
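The robots.txt and protocol counts above can be reproduced for a small URL list with Python's standard library. This is a sketch under stated assumptions: the robots.txt rules and URLs are made up for illustration, and `urllib.robotparser` implements simple prefix matching, which may differ in detail from how Googlebot or Screaming Frog interpret the same file (for example, it does not support `*` or `$` wildcards in paths).

```python
from collections import Counter
from urllib.parse import urlsplit
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, used only for this illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Disallow: /login
"""


def blocked_by_robots(urls, robots_txt, user_agent="*"):
    """Return the URLs that the robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    # parse() accepts the file as a list of lines instead of fetching it.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


def count_protocols(urls):
    """Tally URLs by scheme, i.e. how many were crawled over http vs https."""
    return Counter(urlsplit(url).scheme for url in urls)


if __name__ == "__main__":
    crawled = [
        "https://example.com/",
        "https://example.com/checkout/cart",
        "http://example.com/login",
        "https://example.com/blog/post",
    ]
    print("Blocked by robots.txt:", blocked_by_robots(crawled, ROBOTS_TXT))
    print("Protocols:", dict(count_protocols(crawled)))
```

Note that a URL appearing in the blocked list has only been kept from being crawled; as the text says, a page you want indexed should not be disallowed here in the first place.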

Screaming Frog SEO Spider alt tags PDF

This is a count of PDFs Screaming Frog found during its crawl. Google is very good at crawling and ranking PDFs. The downside is that, because PDFs are not webpages, they cannot share the link authority they acquire. Another downside is that you can't add a Google Analytics tag to them. See the resources below to improve the SEO of PDFs and track them in GA. Helpful resources for improving PDF SEO & Tracking:

Helpful resource about JavaScript standards and performance: Remove Render-Blocking JavaScript by Google.

This is a count of images Screaming Frog found during its crawl. Optimizing images can drastically reduce page load times. Here's a helpful resource for optimizing images:

This is a count of CSS files Screaming Frog found during its crawl. Minifying CSS files and optimizing their delivery can improve page speed.
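To show what CSS minification means in practice, here is a deliberately naive minifier. It is a sketch, not a production tool: it relies on regular expressions, so it does not understand string literals or `url(...)` values, and a real build should use an established CSS minifier instead.

```python
import re


def minify_css(css: str) -> str:
    """Naively minify a CSS string.

    Caveat: quoted strings and url(...) contents are not protected, so a
    "/*", ";" or ":" inside them would also be rewritten.
    """
    # 1. Remove /* ... */ comments (non-greedy, across newlines).
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)
    # 2. Collapse every run of whitespace into a single space.
    css = re.sub(r"\s+", " ", css)
    # 3. Drop spaces around braces, semicolons and commas.
    css = re.sub(r"\s*([{};,])\s*", r"\1", css)
    # 4. Drop spaces after colons ("color: red" -> "color:red"); spaces before
    #    a colon are kept so selectors such as "a :hover" keep their meaning.
    css = re.sub(r":\s+", ":", css)
    # 5. The last declaration in a block does not need its semicolon.
    css = css.replace(";}", "}")
    return css.strip()


if __name__ == "__main__":
    source = "a {\n  color: red;\n  /* note */\n  margin: 0 auto;\n}\n"
    print(minify_css(source))
```

The savings come entirely from bytes the browser ignores (comments, indentation, redundant separators), which is why minified files download faster without changing how the page renders.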

For any organization thinking about using Screaming Frog, I would recommend looking closely at what needs it has and whether Screaming Frog's tools and features will fill those needs. And I would recommend that anyone who does want to use it look at the documentation very closely.

This is a count of HTML files Screaming Frog found during its crawl. Minifying HTML files can help improve page speed. Also, using HTML5 elements can help search engines better understand content. Helpful resource about HTML standards and performance:

This is a count of JavaScript files Screaming Frog found during its crawl. Minifying JS files and optimizing their delivery can improve page speed.
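The HTML, JavaScript, CSS, image and PDF counts in this section are essentially crawled resources grouped by file type. Below is a minimal sketch of that grouping, assuming each resource is known by its URL and, when available, its `Content-Type` header. The bucket names and mappings are hypothetical and only illustrate the idea; they do not describe how Screaming Frog classifies files.

```python
from collections import Counter
from urllib.parse import urlsplit

# Hypothetical mapping from MIME type to the bucket names used in the text.
BUCKET_BY_MIME = {
    "text/html": "HTML",
    "application/xhtml+xml": "HTML",
    "text/javascript": "JavaScript",
    "application/javascript": "JavaScript",
    "text/css": "CSS",
    "application/pdf": "PDF",
}

# Fallback when no Content-Type header is available: guess from the URL path.
BUCKET_BY_EXTENSION = {
    ".html": "HTML", ".htm": "HTML",
    ".js": "JavaScript", ".css": "CSS", ".pdf": "PDF",
    ".png": "Images", ".jpg": "Images", ".jpeg": "Images",
    ".gif": "Images", ".webp": "Images", ".svg": "Images",
}


def classify(url, content_type=None):
    """Return the bucket for one crawled resource."""
    if content_type:
        # Drop parameters such as "; charset=utf-8" before looking the type up.
        mime = content_type.split(";", 1)[0].strip().lower()
        if mime.startswith("image/"):
            return "Images"
        if mime in BUCKET_BY_MIME:
            return BUCKET_BY_MIME[mime]
    path = urlsplit(url).path.lower()
    for ext, bucket in BUCKET_BY_EXTENSION.items():
        if path.endswith(ext):
            return bucket
    return "Other"


def count_by_type(resources):
    """Count resources given as (url, content_type_or_None) pairs."""
    return Counter(classify(url, ctype) for url, ctype in resources)
```

Preferring the `Content-Type` header over the file extension matters for URLs such as `/download?id=7` that serve a PDF without a `.pdf` suffix.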

Prior to using Screaming Frog, we were using our enterprise SEO platform to crawl the site, and that was very web-based. The problem with that was that we could only specify large domains, whereas with Screaming Frog we were able to target specific pages. We chose Screaming Frog because it's a very reasonably priced solution, it provides excellent API access, and it has great flexibility to specify different areas of the site to crawl. Screaming Frog was very easy to onboard within our organization. However, there is a pretty steep learning curve to using it. My rating for Screaming Frog SEO Spider is five out of five.